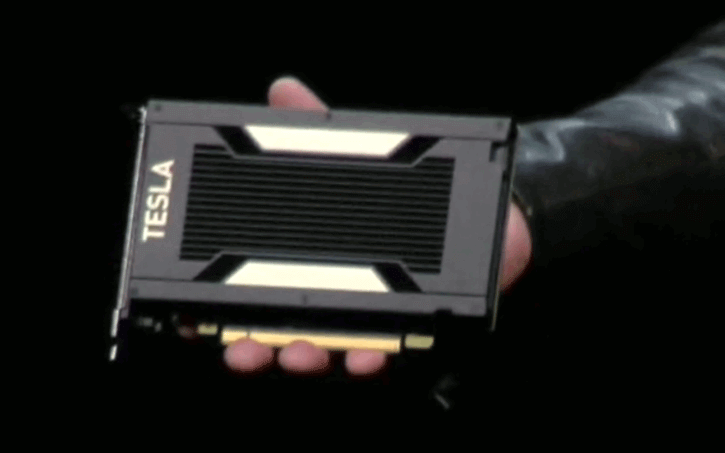

Over at GTC, Nvidia today announced the Tesla Volta V100 processor, a GPU based on the Volta architecture with new Tensor cores. Tesla Volta V100 will be manufactured on TSMC’s 12nm FinFET process, pushing the limits of photolithography as this GPU is huge.

The Tesla Volta V100 graphics processor has 5,120 CUDA / shader cores and is built from an incredible 21 billion transistors. It offers what Nvidia calls 120 Tensor TeraFLOPS of performance, delivered by a new type of unit called Tensor cores. Gaming-wise it would perform in the 15 TFLOPS (FP32) region. The R&D behind this cost Nvidia many years and about $3 billion in investments, CEO Jen-Hsun Huang stated in his keynote.

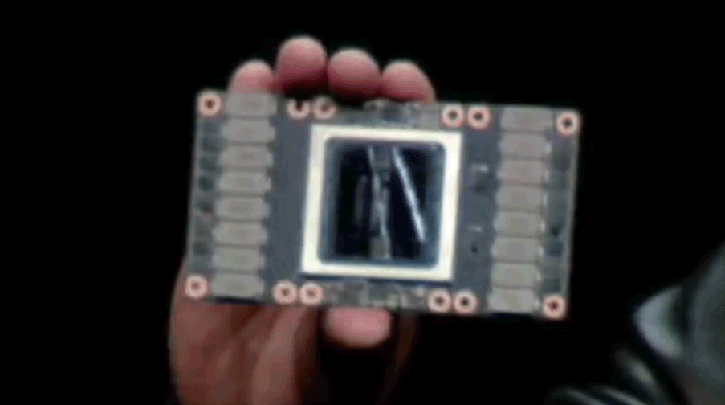

However, this GPU is not going into the GTX line; rather, it’s for the server and deep learning Tesla lineup. The new Tensor Core is based on a 4×4 matrix array and fully optimized for deep learning. Nvidia stated they felt Pascal is fast, but isn’t fast enough. The GPU is huge: at 815 mm² it would roughly fill the palm of your hand.
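
To make the 4×4 matrix idea a bit more concrete, here is a minimal Python sketch of a matrix multiply-accumulate on 4×4 matrices, D = A × B + C. It assumes that one Tensor Core operation is such a multiply-accumulate, which goes beyond what the keynote summary above spells out, so treat it as an illustration only. The function name `tensor_core_mma` is made up for this example, and it uses plain Python floats rather than whatever precision the real hardware uses.

```python
def tensor_core_mma(a, b, c):
    """Return (D, fma_count) with D = A x B + C for 4x4 matrices.

    Hypothetical helper: models the arithmetic only, not the hardware.
    """
    n = 4
    d = [[0.0] * n for _ in range(n)]
    fma_count = 0
    for i in range(n):
        for j in range(n):
            acc = c[i][j]                  # start from the accumulator input
            for k in range(n):
                acc += a[i][k] * b[k][j]   # one fused multiply-add
                fma_count += 1
            d[i][j] = acc
    return d, fma_count


identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
ones = [[1.0] * 4 for _ in range(4)]

d, fmas = tensor_core_mma(identity, ones, ones)
print(fmas)   # 64 multiply-adds for one 4x4x4 operation
print(d[0])   # [2.0, 2.0, 2.0, 2.0]
```

The point of the count is that a single 4×4 multiply-accumulate already bundles 64 multiply-adds, which is why a dedicated unit for it can post much higher throughput numbers than regular shader cores.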

- Massive 815 mm² die size

- 12nm FinFET (TSMC)

- 21B transistors

- 15 FP32 TFLOPS / 7.5 FP64 TFLOPS

- 120 Tensor TFLOPS

- 16 GB HBM2 running at 900 GB/s

- 5120 shader processor cores

Tesla Volta V100 is capable of pushing 15 FP32 TFLOPS and, much like Pascal GP100, is once again paired with 4096-bit HBM2 graphics memory (stacked memory on the GPU package). The unit will get 16 GB of it divided over four stacks (= 4 GB per stack). The memory is fabbed by Samsung. That HUGE die at 815 mm² is fabbed by TSMC on a 12nm FFN fabrication process. In Q3 you will see the first enterprise products based on Volta, starting at $69,000. For us gamers, when a GeForce GTX 1180 or 2080 will be released remains a topic of long discussion. Below is a comparative specification list of the primary Tesla GPUs running up to Volta, which, by the way, runs its 5,120 shader processors at a Boost frequency of roughly 1.45 GHz. It’ll have 320 Texture Units, sheesh.

| Nvidia Tesla Lineup | Tesla K40 | Tesla M40 | Tesla P100 | Tesla V100 |
|---|---|---|---|---|
| GPU | GK110 (Kepler) | GM200 (Maxwell) | GP100 (Pascal) | GV100 (Volta) |
| SMs | 15 | 24 | 56 | 80 |
| TPCs | 15 | 24 | 28 | 40 |
| FP32 Cores / SM | 192 | 128 | 64 | 64 |
| FP32 Cores / GPU | 2880 | 3072 | 3584 | 5120 |
| FP64 Cores / SM | 64 | 4 | 32 | 32 |
| FP64 Cores / GPU | 960 | 96 | 1792 | 2560 |
| Tensor Cores / SM | n/a | n/a | n/a | 8 |
| Tensor Cores / GPU | n/a | n/a | n/a | 640 |
| GPU Boost Clock | 810/875 MHz | 1114 MHz | 1480 MHz | 1455 MHz |
| Peak FP32 TFLOP/s | 5.04 | 6.8 | 10.6 | 15 |
| Peak FP64 TFLOP/s | 1.68 | 0.21 | 5.3 | 7.5 |
| Peak Tensor Core TFLOP/s | n/a | n/a | n/a | 120 |
| Texture Units | 240 | 192 | 224 | 320 |
| Memory Interface | 384-bit GDDR5 | 384-bit GDDR5 | 4096-bit HBM2 | 4096-bit HBM2 |
| Memory Size | Up to 12 GB | Up to 24 GB | 16 GB | 16 GB |
| L2 Cache Size | 1536 KB | 3072 KB | 4096 KB | 6144 KB |
| Shared Memory Size / SM | 16 KB/32 KB/48 KB | 96 KB | 64 KB | Configurable up to 96 KB |
| Register File Size / SM | 256 KB | 256 KB | 256 KB | 256 KB |
| Register File Size / GPU | 3840 KB | 6144 KB | 14336 KB | 20480 KB |
| TDP | 235 Watts | 250 Watts | 300 Watts | 300 Watts |
| Transistors | 7.1 billion | 8 billion | 15.3 billion | 21.1 billion |
| GPU Die Size | 551 mm² | 601 mm² | 610 mm² | 815 mm² |
| Manufacturing Process | 28 nm | 28 nm | 16 nm FinFET+ | 12 nm FFN |
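
The peak throughput figures in the V100 column can be roughly reproduced from the unit counts and the boost clock. The sketch below assumes the usual convention that one fused multiply-add counts as two floating-point operations, and that each Tensor Core performs 64 multiply-adds per clock (one 4×4×4 matrix operation). Neither assumption is spelled out in the table, and the helper name `peak_tflops` is only for illustration.

```python
def peak_tflops(units, boost_mhz, fma_per_unit_per_clock=1):
    """Theoretical peak in TFLOP/s, counting each FMA as 2 FLOPs (assumption)."""
    flops_per_clock = units * fma_per_unit_per_clock * 2
    return flops_per_clock * boost_mhz * 1e6 / 1e12


BOOST_MHZ = 1455  # Tesla V100 boost clock from the table

fp32 = peak_tflops(5120, BOOST_MHZ)    # FP32 cores per GPU
fp64 = peak_tflops(2560, BOOST_MHZ)    # FP64 cores per GPU
tensor = peak_tflops(640, BOOST_MHZ, fma_per_unit_per_clock=64)  # Tensor Cores

print(f"FP32:   {fp32:.2f} TFLOP/s")    # 14.90
print(f"FP64:   {fp64:.2f} TFLOP/s")    # 7.45
print(f"Tensor: {tensor:.2f} TFLOP/s")  # 119.19
```

Under these assumptions the results land just under the rounded 15 / 7.5 / 120 figures Nvidia quotes.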

Now in slightly more detail: a fully enabled GV100 GPU actually consists of six GPCs, 84 Volta SMs, 42 TPCs (each including two SMs), and eight 512-bit memory controllers (4096 bits total). Each SM has 64 FP32 Cores, 64 INT32 Cores, 32 FP64 Cores, and 8 new Tensor Cores. Each SM also includes four texture units.

With 84 SMs, a full GV100 GPU has a total of 5376 FP32 cores, 5376 INT32 cores, 2688 FP64 cores, 672 Tensor Cores, and 336 texture units. Each memory controller is attached to 768 KB of L2 cache, and each HBM2 DRAM stack is controlled by a pair of memory controllers. The full GV100 GPU includes a total of 6144 KB of L2 cache. Note that these totals describe a full GV100 GPU with 84 SMs (different products can use different configurations of GV100). The Tesla V100 accelerator listed in the table above uses 80 SMs.
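
The totals above follow directly from the per-SM numbers, which makes it easy to compare the full 84-SM GV100 with the 80-SM Tesla V100. A small sketch (the dictionary and function names are illustrative, not Nvidia’s):

```python
# Per-SM unit counts as quoted in the text above.
PER_SM = {"fp32": 64, "int32": 64, "fp64": 32, "tensor": 8, "texture": 4}


def gpu_totals(sm_count):
    """Multiply the per-SM unit counts by the number of enabled SMs."""
    return {unit: count * sm_count for unit, count in PER_SM.items()}


full_gv100 = gpu_totals(84)   # fully enabled die
tesla_v100 = gpu_totals(80)   # shipping Tesla V100 configuration

# Memory subsystem: eight 512-bit controllers, 768 KB of L2 each,
# and two controllers per HBM2 stack.
MEM_CONTROLLERS = 8
bus_width_bits = MEM_CONTROLLERS * 512
l2_cache_kb = MEM_CONTROLLERS * 768
hbm2_stacks = MEM_CONTROLLERS // 2

print(full_gv100)
# {'fp32': 5376, 'int32': 5376, 'fp64': 2688, 'tensor': 672, 'texture': 336}
print(tesla_v100)
# {'fp32': 5120, 'int32': 5120, 'fp64': 2560, 'tensor': 640, 'texture': 320}
print(bus_width_bits, l2_cache_kb, hbm2_stacks)  # 4096 6144 4
```

The four disabled SMs account for the difference between 5376 and 5120 FP32 cores, and the same ratio applies to every other per-SM unit.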

Source: Guru3d